Understanding AI Without Coding: A New Approach to Learning

Why I started questioning how we learn AI

When people talk about learning AI, the conversation almost always starts with coding.

Python, models, frameworks.

But the more I explore this space, the more I feel there is a gap.

Most people don’t struggle with coding itself.

They struggle with understanding how AI systems actually behave.

And these are two very different things.

Coding is not the same as understanding

Learning to code teaches you how to build.

But it doesn’t necessarily teach you how systems:

make decisions

interpret instructions

react to their environment

According to the OECD, AI literacy is becoming a critical skill, not just for engineers but for the broader population.

This implies something important:

Understanding AI should not be limited to technical profiles.

What most learning approaches are missing

Most current approaches focus on:

syntax

tools

technical implementation

But they often miss something fundamental: interaction.

AI is not just something you write.

It is something that behaves.

And behavior is not learned only through code.

It is learned through observation and experimentation.

What I’m observing in practice

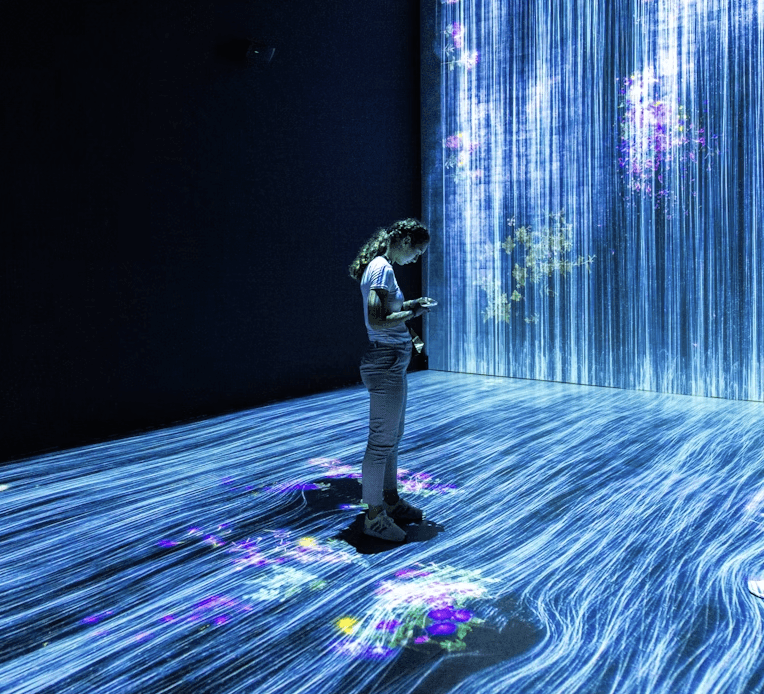

In the environments I’m exploring, especially in China, a different approach is emerging.

AI is increasingly embedded into devices, robots and interactive systems.

Which means learners can:

see how systems react

test inputs and outputs

understand cause and effect

Instead of only writing code, they interact with systems.

And that changes the learning dynamic completely.

Why interaction matters

Research in Human-Computer Interaction consistently shows that learning improves when people can interact directly with systems rather than passively observe them.

This is particularly relevant for AI.

Because AI is not intuitive.

You don’t see what’s happening inside.

You need ways to externalize it.

Interaction makes invisible processes visible.

Robotics as a learning interface

This is where robotics becomes interesting.

Not just as a technology,

but as an interface for learning.

A robot allows you to:

give instructions

observe behavior

test hypotheses

It creates a bridge between abstract concepts and real-world understanding.

The opportunity beyond technical education

This shift opens a much larger opportunity.

Learning AI is no longer just about training engineers.

It becomes about:

enabling people in non-technical roles

helping people understand systems they will interact with

building intuition, not just skills

According to the World Economic Forum, the future of work will require a broader understanding of AI across all roles.

Which means:

We need new ways to learn.

The gap I keep noticing

What I often see, especially in Europe, is a strong focus on:

coding

certifications

technical mastery

But less emphasis on:

intuition

interaction

system understanding

This creates a barrier.

People feel that AI is complex, technical and inaccessible.

When in reality, part of it can be understood in much more intuitive ways.

Where this is going

We are moving toward a world where:

AI is embedded in everyday tools

systems act autonomously

humans interact with intelligent environments

Understanding AI will become less about writing code,

and more about understanding behavior.

Final thought

Learning AI should not start with syntax.

It should start with interaction.

Because before building intelligent systems,

we need to understand how to engage with them.